Qwen3 235B A22B Thinking 2507

Qwen3 235B A22B Thinking 2507 (qwen/qwen3-235b-a22b-thinking-2507) is a qwen3_moe model from Qwen with a 262,144-token context window and up to 262,144 output tokens, priced at $0.11 per 1M input tokens and $0.60 per 1M output tokens. It is available via the haimaker.ai OpenAI-compatible API.

Overview

Qwen3 235B A22B Thinking 2507 is a chat model by Qwen. It supports a 262K-token context window, function calling, and reasoning.

Model Card

Qwen3-235B-A22B-Thinking-2507

Highlights

Over the past three months, we have continued to scale the thinking capability of Qwen3-235B-A22B, improving both the quality and depth of reasoning. We are pleased to introduce Qwen3-235B-A22B-Thinking-2507, featuring the following key enhancements:

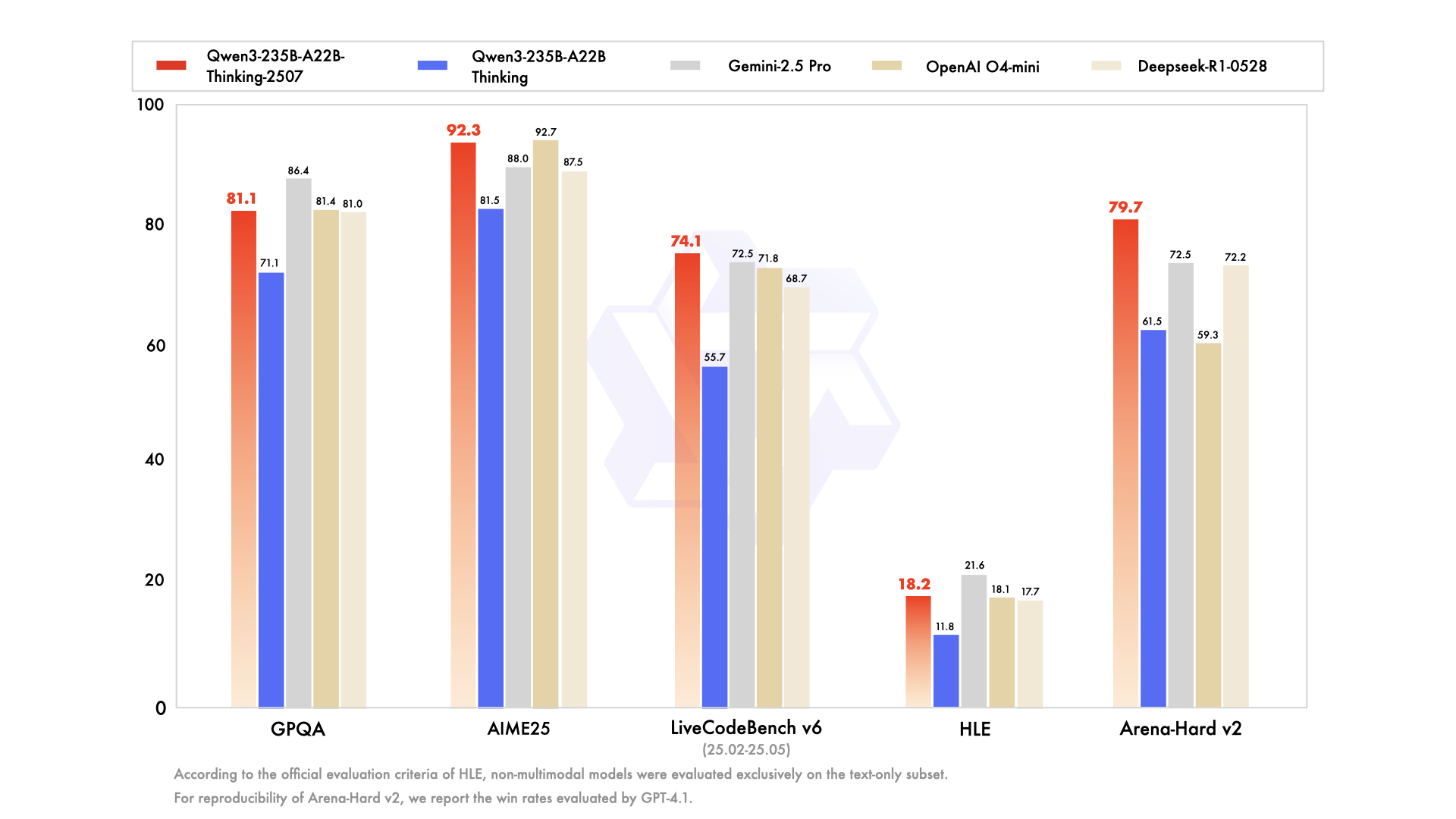

- Significantly improved performance on reasoning tasks, including logical reasoning, mathematics, science, coding, and academic benchmarks that typically require human expertise — achieving state-of-the-art results among open-source thinking models.

- Markedly better general capabilities, such as instruction following, tool usage, text generation, and alignment with human preferences.

- Enhanced 256K long-context understanding capabilities.

NOTE: This version has an increased thinking length. We strongly recommend its use in highly complex reasoning tasks.

Model Overview

Qwen3-235B-A22B-Thinking-2507 has the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

- Number of Parameters: 235B in total and 22B activated

- Number of Parameters (Non-Embedding): 234B

- Number of Layers: 94

- Number of Attention Heads (GQA): 64 for Q and 4 for KV

- Number of Experts: 128

- Number of Activated Experts: 8

- Context Length: 262,144 natively.
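To make the sparsity of the mixture-of-experts layout concrete, the short sketch below derives a few ratios from the figures in the list above. It uses only the numbers stated there; the variable names are illustrative.

```python
# Figures copied from the feature list above.
TOTAL_PARAMS_B = 235    # total parameters, in billions
ACTIVE_PARAMS_B = 22    # parameters activated per token, in billions
NUM_EXPERTS = 128
ACTIVE_EXPERTS = 8
Q_HEADS = 64
KV_HEADS = 4

# Share of the parameters that take part in a single forward pass.
active_param_share = ACTIVE_PARAMS_B / TOTAL_PARAMS_B
# Share of the experts selected for each token.
active_expert_share = ACTIVE_EXPERTS / NUM_EXPERTS
# With grouped-query attention, several query heads share one KV head.
queries_per_kv_head = Q_HEADS // KV_HEADS

print(f"active parameters per token: {active_param_share:.1%}")   # 9.4%
print(f"experts routed per token:    {active_expert_share:.2%}")  # 6.25%
print(f"query heads per KV head:     {queries_per_kv_head}")      # 16
```

Roughly one tenth of the parameters are used per token, which is why the model is described as 235B total but 22B activated.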

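Additionally, to enforce model thinking, the default chat template automatically includes `<think>`. It is therefore normal for the model's output to contain only a closing `</think>` tag without an explicit opening `<think>` tag.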
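Because the output normally carries only the closing tag, client code that receives plain text has to split on `</think>` itself. The helper below is a minimal sketch of that idea for already-decoded strings; the function name `split_thinking` is hypothetical and not part of any Qwen library.

```python
def split_thinking(text: str, close_tag: str = "</think>") -> tuple[str, str]:
    """Split decoded output into (thinking, answer) at the last closing tag."""
    head, sep, tail = text.rpartition(close_tag)
    if not sep:
        # No closing tag found: treat the whole text as the answer.
        return "", text.strip()
    # The opening tag usually comes from the chat template, but drop it if present.
    thinking = head.replace("<think>", "", 1).strip()
    return thinking, tail.strip()


thinking, answer = split_thinking("2 + 2 is basic addition.</think>\n\nThe answer is 4.")
print(thinking)  # 2 + 2 is basic addition.
print(answer)    # The answer is 4.
```

If generation stops before the closing tag is produced, this sketch returns everything as the answer, so callers may want to check for an empty thinking part.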

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our blog, GitHub, and Documentation.

Performance

| | Deepseek-R1-0528 | OpenAI O4-mini | OpenAI O3 | Gemini-2.5 Pro | Claude4 Opus Thinking | Qwen3-235B-A22B Thinking | Qwen3-235B-A22B-Thinking-2507 |
|--- | --- | --- | --- | --- | --- | --- | --- |
| Knowledge | | | | | | | |
| MMLU-Pro | 85.0 | 81.9 | 85.9 | 85.6 | - | 82.8 | 84.4 |
| MMLU-Redux | 93.4 | 92.8 | 94.9 | 94.4 | 94.6 | 92.7 | 93.8 |
| GPQA | 81.0 | 81.4 | 83.3 | 86.4 | 79.6 | 71.1 | 81.1 |
| SuperGPQA | 61.7 | 56.4 | - | 62.3 | - | 60.7 | 64.9 |
| Reasoning | | | | | | | |
| AIME25 | 87.5 | 92.7 | 88.9 | 88.0 | 75.5 | 81.5 | 92.3 |
| HMMT25 | 79.4 | 66.7 | 77.5 | 82.5 | 58.3 | 62.5 | 83.9 |
| LiveBench 20241125 | 74.7 | 75.8 | 78.3 | 82.4 | 78.2 | 77.1 | 78.4 |
| HLE | 17.7# | 18.1* | 20.3 | 21.6 | 10.7 | 11.8# | 18.2# |
| Coding | | | | | | | |
| LiveCodeBench v6 (25.02-25.05) | 68.7 | 71.8 | 58.6 | 72.5 | 48.9 | 55.7 | 74.1 |
| CFEval | 2099 | 1929 | 2043 | 2001 | - | 2056 | 2134 |
| OJBench | 33.6 | 33.3 | 25.4 | 38.9 | - | 25.6 | 32.5 |
| Alignment | | | | | | | |
| IFEval | 79.1 | 92.4 | 92.1 | 90.8 | 89.7 | 83.4 | 87.8 |
| Arena-Hard v2$ | 72.2 | 59.3 | 80.8 | 72.5 | 59.1 | 61.5 | 79.7 |
| Creative Writing v3 | 86.3 | 78.8 | 87.7 | 85.9 | 83.8 | 84.6 | 86.1 |
| WritingBench | 83.2 | 78.4 | 85.3 | 83.1 | 79.1 | 80.3 | 88.3 |
| Agent | | | | | | | |
| BFCL-v3 | 63.8 | 67.2 | 72.4 | 67.2 | 61.8 | 70.8 | 71.9 |
| TAU1-Retail | 63.9 | 71.8 | 73.9 | 74.8 | - | 54.8 | 67.8 |
| TAU1-Airline | 53.5 | 49.2 | 52.0 | 52.0 | - | 26.0 | 46.0 |
| TAU2-Retail | 64.9 | 71.0 | 76.3 | 71.3 | - | 40.4 | 71.9 |
| TAU2-Airline | 60.0 | 59.0 | 70.0 | 60.0 | - | 30.0 | 58.0 |
| TAU2-Telecom | 33.3 | 42.0 | 60.5 | 37.4 | - | 21.9 | 45.6 |
| Multilingualism | | | | | | | |
| MultiIF | 63.5 | 78.0 | 80.3 | 77.8 | - | 71.9 | 80.6 |
| MMLU-ProX | 80.6 | 79.0 | 83.3 | 84.7 | - | 80.0 | 81.0 |
| INCLUDE | 79.4 | 80.8 | 86.6 | 85.1 | - | 78.7 | 81.0 |
| PolyMATH | 46.9 | 48.7 | 49.7 | 52.2 | - | 54.7 | 60.1 |

\* For OpenAI O4-mini and O3, we use a medium reasoning effort, except for scores marked with \*, which are generated using high reasoning effort.

\# According to the official evaluation criteria of HLE, scores marked with \# refer to models that are not multi-modal and were evaluated only on the text-only subset.

$ For reproducibility, we report the win rates evaluated by GPT-4.1.

\& For highly challenging tasks (including PolyMATH and all reasoning and coding tasks), we use an output length of 81,920 tokens. For all other tasks, we set the output length to 32,768.

Quickstart

Qwen3-MoE is supported in recent releases of Hugging Face transformers, and we advise you to use the latest version.

With transformers<4.51.0, you will encounter the following error:

KeyError: 'qwen3_moe'

The following code snippet illustrates how to use the model to generate content from given inputs.

    from transformers import AutoModelForCausalLM, AutoTokenizer

    model_name = "Qwen/Qwen3-235B-A22B-Thinking-2507"

    # load the tokenizer and the model
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForCausalLM.from_pretrained(
        model_name,
        torch_dtype="auto",
        device_map="auto"
    )

    # prepare the model input
    prompt = "Give me a short introduction to large language model."
    messages = [
        {"role": "user", "content": prompt}
    ]
    text = tokenizer.apply_chat_template(
        messages,
        tokenize=False,
        add_generation_prompt=True,
    )
    model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

    # conduct text completion
    generated_ids = model.generate(
        **model_inputs,
        max_new_tokens=32768
    )
    output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

    # parsing thinking content
    try:
        # rindex finding 151668 (</think>)
        index = len(output_ids) - output_ids[::-1].index(151668)
    except ValueError:
        # no closing tag found: treat the whole output as the answer
        index = 0

    thinking_content = tokenizer.decode(output_ids[:index], skip_special_tokens=True).strip("\n")
    content = tokenizer.decode(output_ids[index:], skip_special_tokens=True).strip("\n")

    print("thinking content:", thinking_content)  # no opening <think> tag
    print("content:", content)
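The version requirement mentioned above can be checked before attempting to load the model. The following is a minimal sketch that compares release numbers without extra dependencies; the helper names `parse_release` and `supports_qwen3_moe` are hypothetical, and the parsing is deliberately coarse (pre-release suffixes are ignored).

```python
from importlib.metadata import PackageNotFoundError, version

MIN_TRANSFORMERS = (4, 51, 0)  # first release line that knows 'qwen3_moe', per the text above


def parse_release(v: str) -> tuple[int, ...]:
    """Turn '4.51.0' or '4.52.0.dev0' into a tuple of three leading integers."""
    parts: list[int] = []
    for piece in v.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    # Pad short versions such as '4.51' so tuple comparison behaves as expected.
    parts += [0] * (3 - len(parts))
    return tuple(parts[:3])


def supports_qwen3_moe(v: str) -> bool:
    return parse_release(v) >= MIN_TRANSFORMERS


if __name__ == "__main__":
    try:
        installed = version("transformers")
    except PackageNotFoundError:
        print("transformers is not installed")
    else:
        status = "ok" if supports_qwen3_moe(installed) else "too old for qwen3_moe"
        print(installed, status)
```

Running such a check at startup gives a clearer message than the `KeyError` raised during model loading.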

For deployment, you can use sglang>=0.4.6.post1 or vllm>=0.8.5 to create an OpenAI-compatible API endpoint:

- SGLang:

    python -m sglang.launch_server --model-path Qwen/Qwen3-235B-A22B-Thinking-2507 --tp 8 --context-length 262144 --reasoning-parser deepseek-r1

- vLLM:

    vllm serve Qwen/Qwen3-235B-A22B-Thinking-2507 --tensor-parallel-size 8 --max-model-len 262144 --enable-reasoning --reasoning-parser deepseek_r1

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.
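When a server is started with a reasoning parser, as in the commands above, the reasoning text is typically returned separately from the final answer. The sketch below assumes the parsed reasoning appears in a `reasoning_content` field next to `content` in the chat completion message; that field name is an assumption to verify against your server version, and `split_reasoning` is a hypothetical helper.

```python
def split_reasoning(response: dict) -> tuple[str, str]:
    """Return (reasoning, answer) from a chat-completion response dict."""
    message = response["choices"][0]["message"]
    # ASSUMPTION: the reasoning parser stores its output under 'reasoning_content'.
    reasoning = message.get("reasoning_content") or ""
    answer = message.get("content") or ""
    return reasoning, answer


# Hand-written example response, not captured from a real server.
example = {
    "choices": [
        {
            "message": {
                "role": "assistant",
                "reasoning_content": "The user asks for 2 + 2.",
                "content": "4",
            }
        }
    ]
}

reasoning, answer = split_reasoning(example)
print("reasoning:", reasoning)
print("answer:", answer)
```

If the server is launched without a reasoning parser, the field is absent and the reasoning stays inside `content`, so the helper returns an empty reasoning string.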

Agentic Use

Qwen3 excels at tool calling. We recommend using Qwen-Agent to make the best use of Qwen3's agentic abilities. Qwen-Agent encapsulates tool-calling templates and tool-calling parsers internally, greatly reducing coding complexity.

To define the available tools, you can use an MCP configuration file, use the tools integrated in Qwen-Agent, or integrate other tools yourself.

    from qwen_agent.agents import Assistant

    # Define LLM
    # Using Alibaba Cloud Model Studio
    llm_cfg = {
        'model': 'qwen3-235b-a22b-thinking-2507',
        'model_type': 'qwen_dashscope',
    }

    # Using OpenAI-compatible API endpoint. It is recommended to disable the reasoning and the tool call parsing
    # functionality of the deployment frameworks and let Qwen-Agent automate the related operations. For example,
    # VLLM_USE_MODELSCOPE=true vllm serve Qwen/Qwen3-235B-A22B-Thinking-2507 --served-model-name Qwen3-235B-A22B-Thinking-2507 --tensor-parallel-size 8 --max-model-len 262144
    #
    # llm_cfg = {
    #     'model': 'Qwen3-235B-A22B-Thinking-2507',
    #
    #     # Use a custom endpoint compatible with OpenAI API:
    #     'model_server': 'http://localhost:8000/v1',  # api_base without reasoning and tool call parsing
    #     'api_key': 'EMPTY',
    #     'generate_cfg': {
    #         'thought_in_content': True,
    #     },
    # }

    # Define Tools
    tools = [
        {'mcpServers': {  # You can specify the MCP configuration file
                'time': {
                    'command': 'uvx',
                    'args': ['mcp-server-time', '--local-timezone=Asia/Shanghai']
                },
                "fetch": {
                    "command": "uvx",
                    "args": ["mcp-server-fetch"]
                }
            }
        },
        'code_interpreter',  # Built-in tools
    ]

    # Define Agent
    bot = Assistant(llm=llm_cfg, function_list=tools)

    # Streaming generation
    messages = [{'role': 'user', 'content': 'https://qwenlm.github.io/blog/ Introduce the latest developments of Qwen'}]
    for responses in bot.run(messages=messages):
        pass
    print(responses)

Processing Ultra-Long Texts

To support ultra-long context processing (up to 1 million tokens), we integrate two key techniques:

- Dual Chunk Attention (DCA): A length extrapolation method that splits long sequences into manageable chunks while preserving global coherence.

- MInference: A sparse attention mechanism that reduces computational overhead by focusing on critical token interactions.

For full technical details, see the Qwen2.5-1M Technical Report.

How to Enable 1M Token Context

NOTE: To effectively process a 1 million token context, users will require approximately 1000 GB of total GPU memory. This accounts for model weights, KV-cache storage, and peak activation memory demands.
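A rough back-of-the-envelope estimate helps show where a budget of that size goes. The sketch below is an illustration only: the layer and KV-head counts come from the model overview, while the head dimension of 128 and 16-bit storage are assumptions that this page does not state.

```python
NUM_LAYERS = 94             # from the model overview
KV_HEADS = 4                # from the model overview
TOTAL_PARAMS = 235e9        # from the model overview
HEAD_DIM = 128              # ASSUMPTION: not stated in this document
BYTES_PER_VALUE = 2         # ASSUMPTION: 16-bit weights and KV cache
CONTEXT_TOKENS = 1_010_000  # roughly the 1M-token context discussed here


def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int, bytes_per_value: int) -> int:
    # Keys and values are both cached, hence the factor of 2.
    return 2 * layers * kv_heads * head_dim * bytes_per_value


kv_per_token = kv_bytes_per_token(NUM_LAYERS, KV_HEADS, HEAD_DIM, BYTES_PER_VALUE)
kv_total_gb = kv_per_token * CONTEXT_TOKENS / 1e9
weights_gb = TOTAL_PARAMS * BYTES_PER_VALUE / 1e9

print(f"KV cache per token:    {kv_per_token} bytes")
print(f"KV cache at 1M tokens: {kv_total_gb:.0f} GB")
print(f"model weights:         {weights_gb:.0f} GB")
```

Under these assumptions the weights take about 470 GB and a single 1M-token sequence about 194 GB of KV cache; the rest of the stated budget would cover peak activations and framework overhead.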

Step 1: Update Configuration File

Download the model and replace the content of your config.json with config_1m.json, which includes the config for length extrapolation and sparse attention.

    export MODELNAME=Qwen3-235B-A22B-Thinking-2507
    huggingface-cli download Qwen/${MODELNAME} --local-dir ${MODELNAME}
    mv ${MODELNAME}/config.json ${MODELNAME}/config.json.bak
    mv ${MODELNAME}/config_1m.json ${MODELNAME}/config.json

Step 2: Launch Model Server

After updating the config, proceed with either vLLM or SGLang for serving the model.

Option 1: Using vLLM

To run Qwen with 1M context support:

    pip install -U vllm \
        --torch-backend=auto \
        --extra-index-url https://wheels.vllm.ai/nightly

Then launch the server with Dual Chunk Flash Attention enabled:

    VLLM_ATTENTION_BACKEND=DUAL_CHUNK_FLASH_ATTN VLLM_USE_V1=0 \
    vllm serve ./Qwen3-235B-A22B-Thinking-2507 \
        --tensor-parallel-size 8 \
        --max-model-len 1010000 \
        --enable-chunked-prefill \
        --max-num-batched-tokens 131072 \
        --enforce-eager \
        --max-num-seqs 1 \
        --gpu-memory-utilization 0.85 \
        --enable-reasoning --reasoning-parser deepseek_r1

##### Key Parameters

| Parameter | Purpose |
|--------|--------|
| VLLM_ATTENTION_BACKEND=DUAL_CHUNK_FLASH_ATTN | Enables the custom attention kernel for long-context efficiency |
| --max-model-len 1010000 | Sets maximum context length to ~1M tokens |
| --enable-chunked-prefill | Allows chunked prefill for very long inputs (avoids OOM) |
| --max-num-batched-tokens 131072 | Controls batch size during prefill; balances throughput and memory |
| --enforce-eager | Disables CUDA graph capture (required for dual chunk attention) |
| --max-num-seqs 1 | Limits concurrent sequences due to extreme memory usage |
| --gpu-memory-utilization 0.85 | Sets the fraction of GPU memory to be used for the model executor |

Option 2: Using SGLang

First, clone and install the specialized branch:

    git clone https://github.com/sgl-project/sglang.git
    cd sglang
    pip install -e "python[all]"

Launch the server with DCA support:

    python3 -m sglang.launch_server \
        --model-path ./Qwen3-235B-A22B-Thinking-2507 \
        --context-length 1010000 \
        --mem-frac 0.75 \
        --attention-backend dual_chunk_flash_attn \
        --tp 8 \
        --chunked-prefill-size 131072 \
        --reasoning-parser deepseek-r1

##### Key Parameters

| Parameter | Purpose |
|---------|--------|
| --attention-backend dual_chunk_flash_attn | Activates Dual Chunk Flash Attention |
| --context-length 1010000 | Defines max input length |
| --mem-frac 0.75 | The fraction of the memory used for static allocation (model weights and KV cache memory pool). Use a smaller value if you see out-of-memory errors. |
| --tp 8 | Tensor parallelism size (matches model sharding) |
| --chunked-prefill-size 131072 | Prefill chunk size for handling long inputs without OOM |

Troubleshooting:

The VRAM reserved for the KV cache is insufficient.

- vLLM: Consider reducing `max_model_len` or increasing `tensor_parallel_size` and `gpu_memory_utilization`. Alternatively, you can reduce `max_num_batched_tokens`, although this may significantly slow down inference.

- SGLang: Consider reducing `context-length` or increasing `tp` and `mem-frac`. Alternatively, you can reduce `chunked-prefill-size`, although this may significantly slow down inference.

The VRAM reserved for activation weights is insufficient. You can try lowering `gpu_memory_utilization` or `mem-frac`, but be aware that this might reduce the VRAM available for the KV cache.

The input is too lengthy. Consider using a shorter sequence or increasing `max_model_len` or `context-length`.

Long-Context Performance

We test the model on a 1M version of the RULER benchmark.

| Model Name | Acc avg | 4k | 8k | 16k | 32k | 64k | 96k | 128k | 192k | 256k | 384k | 512k | 640k | 768k | 896k | 1000k |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Qwen3-235B-A22B (Thinking) | 82.9 | 97.3 | 95.9 | 95.3 | 88.7 | 91.7 | 91.5 | 87.9 | 85.4 | 78.4 | 75.6 | 73.7 | 73.6 | 70.6 | 69.9 | 67.6 |
| Qwen3-235B-A22B-Thinking-2507 (Full Attention) | 95.4 | 99.6 | 100.0 | 99.5 | 99.6 | 99.1 | 100.0 | 98.8 | 98.1 | 96.1 | 95.2 | 90.0 | 91.7 | 89.7 | 87.9 | 85.9 |
| Qwen3-235B-A22B-Thinking-2507 (Sparse Attention) | 95.5 | 100.0 | 100.0 | 100.0 | 100.0 | 98.6 | 99.5 | 98.8 | 98.1 | 95.4 | 93.0 | 90.7 | 91.9 | 91.7 | 87.8 | 86.6 |

- All models are evaluated with Dual Chunk Attention enabled.

- Since the evaluation is time-consuming, we use 260 samples for each length (13 sub-tasks, 20 samples for each).

- To avoid overly verbose reasoning, we set the thinking budget to 8,192 tokens.
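As a quick consistency check, the "Acc avg" column can be reproduced as the unweighted mean of the 15 per-length scores in the table above. The snippet below is only a worked arithmetic example using those published numbers.

```python
# Per-length accuracies copied from the RULER table (4k through 1000k).
ruler_scores = {
    "Qwen3-235B-A22B (Thinking)": [
        97.3, 95.9, 95.3, 88.7, 91.7, 91.5, 87.9, 85.4,
        78.4, 75.6, 73.7, 73.6, 70.6, 69.9, 67.6,
    ],
    "Qwen3-235B-A22B-Thinking-2507 (Full Attention)": [
        99.6, 100.0, 99.5, 99.6, 99.1, 100.0, 98.8, 98.1,
        96.1, 95.2, 90.0, 91.7, 89.7, 87.9, 85.9,
    ],
    "Qwen3-235B-A22B-Thinking-2507 (Sparse Attention)": [
        100.0, 100.0, 100.0, 100.0, 98.6, 99.5, 98.8, 98.1,
        95.4, 93.0, 90.7, 91.9, 91.7, 87.8, 86.6,
    ],
}

# Unweighted mean over the context lengths, rounded to one decimal place.
averages = {name: round(sum(s) / len(s), 1) for name, s in ruler_scores.items()}
for name, avg in averages.items():
    print(f"{name}: {avg}")

# 13 sub-tasks with 20 samples each give the 260 samples per length.
samples_per_length = 13 * 20
print("samples per length:", samples_per_length)
```

The computed means are 82.9, 95.4, and 95.5, matching the reported averages.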

Best Practices

To achieve optimal performance, we recommend the following settings:

- We suggest using Temperature=0.6, TopP=0.95, TopK=20, and MinP=0.

- For supported frameworks, you can adjust the `presence_penalty` parameter between 0 and 2 to reduce endless repetitions. However, using a higher value may occasionally result in language mixing and a slight decrease in model performance.

- Math Problems: Include "Please reason step by step, and put your final answer within \boxed{}." in the prompt.

- Multiple-Choice Questions: Add the following JSON structure to the prompt to standardize responses: "Please show your choice in the `answer` field with only the choice letter, e.g., `"answer": "C"`."
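The recommended settings can be collected into a single request body for an OpenAI-compatible chat endpoint. The sketch below is one possible arrangement, not an official client: `build_chat_payload` is a hypothetical helper, and `top_k` and `min_p` are non-standard fields that only some servers accept, so check what your endpoint supports.

```python
import json

MATH_SUFFIX = "Please reason step by step, and put your final answer within \\boxed{}."


def build_chat_payload(model: str, prompt: str, presence_penalty: float = 0.0,
                       max_tokens: int = 32768) -> dict:
    """Build a chat-completions body using the sampling settings suggested above."""
    if not 0.0 <= presence_penalty <= 2.0:
        raise ValueError("presence_penalty should stay between 0 and 2")
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.6,
        "top_p": 0.95,
        "top_k": 20,   # ASSUMPTION: non-standard field, accepted by some servers only
        "min_p": 0.0,  # ASSUMPTION: non-standard field, accepted by some servers only
        "presence_penalty": presence_penalty,
        "max_tokens": max_tokens,
    }


payload = build_chat_payload(
    "qwen/qwen3-235b-a22b-thinking-2507",
    "What is 17 * 23? " + MATH_SUFFIX,
    presence_penalty=0.5,
)
print(json.dumps(payload, indent=2))
```

Keeping the settings in one function makes it easy to apply the same sampling configuration across math, multiple-choice, and general prompts.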

Citation

If you find our work helpful, feel free to cite it.

    @misc{qwen3technicalreport,
        title={Qwen3 Technical Report},
        author={Qwen Team},
        year={2025},
        eprint={2505.09388},
        archivePrefix={arXiv},
        primaryClass={cs.CL},
        url={https://arxiv.org/abs/2505.09388},
    }

Features & Capabilities

| Feature | Value |
|---|---|
| Mode | chat |
| Context Window | 262,144 tokens |
| Max Output | 262,144 tokens |
| Function Calling | Supported |
| Vision | - |
| Reasoning | Supported |
| Web Search | - |
| URL Context | - |

Technical Details

| Property | Value |
|---|---|
| Architecture | Qwen3MoeForCausalLM |
| Model Type | qwen3_moe |
| Library | transformers |

API Usage

    from openai import OpenAI

    client = OpenAI(
        base_url="https://api.haimaker.ai/v1",
        api_key="YOUR_API_KEY",
    )

    response = client.chat.completions.create(
        model="qwen/qwen3-235b-a22b-thinking-2507",
        messages=[
            {"role": "user", "content": "Hello, how are you?"}
        ],
    )

    print(response.choices[0].message.content)

Frequently Asked Questions

What is the context window of Qwen3 235B A22B Thinking 2507?

Qwen3 235B A22B Thinking 2507 (qwen/qwen3-235b-a22b-thinking-2507) has a 262,144-token context window and supports up to 262,144 output tokens per request.

How much does Qwen3 235B A22B Thinking 2507 cost?

Qwen3 235B A22B Thinking 2507 is priced at $0.11 per 1M input tokens and $0.60 per 1M output tokens when accessed via the haimaker.ai OpenAI-compatible API.
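For a rough sense of per-request cost, the listed prices can be applied to token counts directly. The sketch below is a simple estimate; it assumes reasoning tokens are billed as output tokens, which this page does not state, and `estimate_cost_usd` is a hypothetical helper.

```python
INPUT_USD_PER_MTOK = 0.11   # price per 1M input tokens, from the answer above
OUTPUT_USD_PER_MTOK = 0.60  # price per 1M output tokens, from the answer above


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request in US dollars."""
    total = input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK
    return total / 1_000_000


# Example: a 20,000-token prompt with 8,000 tokens of reasoning plus answer.
cost = estimate_cost_usd(20_000, 8_000)
print(f"estimated cost: ${cost:.4f}")  # estimated cost: $0.0070
```

Because a thinking model can produce long reasoning traces, output tokens are likely to dominate the bill for short prompts.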

What features does Qwen3 235B A22B Thinking 2507 support?

Qwen3 235B A22B Thinking 2507 supports function calling and reasoning.

How do I use Qwen3 235B A22B Thinking 2507 via API?

Send requests to https://api.haimaker.ai/v1/chat/completions with model "qwen/qwen3-235b-a22b-thinking-2507" using any OpenAI-compatible SDK. Authentication uses a Bearer API key from https://app.haimaker.ai.
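For clients that do not use an SDK, the same request can be built with the Python standard library. This is a minimal sketch under the details given above (endpoint URL, model name, Bearer authentication); the environment variable `HAIMAKER_API_KEY` is a hypothetical name, and the response shape is assumed to follow the usual chat-completions format.

```python
import json
import os
import urllib.request

API_URL = "https://api.haimaker.ai/v1/chat/completions"
MODEL = "qwen/qwen3-235b-a22b-thinking-2507"


def build_request(api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions POST request."""
    body = json.dumps({
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("HAIMAKER_API_KEY")  # hypothetical variable name
    if key:
        # Only contacts the network when a key is configured.
        with urllib.request.urlopen(build_request(key, "Hello, how are you?")) as resp:
            data = json.load(resp)
        print(data["choices"][0]["message"]["content"])
    else:
        print("Set HAIMAKER_API_KEY to send a request.")
```

Separating request construction from sending keeps the payload easy to inspect and test without network access.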
